Cold Email Case Study: 97% More Appointments After 1 A/B Test (w/ Templates)

This case study breaks down how we doubled the cold email results for a business broker (and longtime MailShake customer) with a single, focused A/B test.

You’ll see exactly how we helped Robert Allen of Acme Advisors & Brokers turn a handful of “negative replies” into a campaign consistently landing multiple appointments per day—powered by a smarter A/B testing approach.

Plus, we’ll walk through why our lead generation agency now relies on “qualitative A/B tests,” and how this shift helped us take one email from a 9.8% to an 18% reply rate by creating just one variation built on real feedback.

Campaign Stats:

4 emails

206 prospects

Open rate: 65%

30% reply rate

64 replies total

30+ meetings generated

The New Cold Email A/B Test

You’ve probably been told that you should A/B test your cold emails.

But after talking with data scientists and marketing gurus like Brian Massey at Conversion Sciences…

…It turns out most of us (myself included) have been A/B testing cold emails wrong!

Gasp!

How To Run Cold Email A/B Tests Like a Ph.D.

Here’s the biggest mistake cold emailers make when A/B testing:

“We look at reply rates instead of the actual replies.”

Yep. Now, when I run A/B tests, I don’t care about reply rates. At least, not at first.

Why? According to data scientists, reply rates are not a reliable metric until you get 100 replies per cold email variation. (Learn more on statistical significance here.)

Translation: If you end a test BEFORE you get 100 replies per variation, you won’t know (with confidence) which email performed better!

I’m no Ph.D., but that means if you get a 10% reply rate per variation, you’d need to send 1,000 emails per variation (2,000 in total for an A and a B) before you can properly judge an A/B test.
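Here is a quick sketch of that arithmetic in Python. The function name and parameters are my own, and the 100-replies threshold is simply the rule of thumb quoted above, not a formal power calculation.

```python
import math


def emails_needed(replies_per_variation: int, reply_rate_pct: float, variations: int = 2) -> int:
    """Total sends required so every variation collects the target number of replies."""
    if not 0 < reply_rate_pct <= 100:
        raise ValueError("reply_rate_pct must be between 0 and 100")
    # Sends needed for ONE variation, rounded up to a whole email.
    per_variation = math.ceil(replies_per_variation * 100 / reply_rate_pct)
    return per_variation * variations


# 100 replies per variation at a 10% reply rate, with an A and a B email.
total = emails_needed(100, 10)
print(total)  # 2000
```

Plug in your own reply rate and list size to see whether a purely numbers-based test is even possible for your segment.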

Do you see the problem here?

That kind of volume may work with landing page optimization or PPC ads… but if you have a VERY targeted list, you won’t have 2,000 people to contact per segment.

So what’s a sales team to do?

Run “Feedback Guided” A/B tests.

Turns out, analyzing your replies will help you improve results FAR more than checking reply rates.

This isn’t a new concept. (It’s just using qualitative data instead of quantitative data.) But it’s the best way to A/B test if you want to double your response rate quickly.

To explain how we did this, and how you can do the same, let’s look at the case study:

Case Study Overview

When we started working with Robert, he had a clear goal in mind: Generate 1 call per day.

Specifically, our goal was to bring Robert’s team 1 scheduled call per day with a qualified business owner who was interested in having them sell the business.

To hit that goal, we’d need to generate 3 interested replies per day. (We could not assume that everyone who replied would actually show up to the call. So to be safe, 3 replies per day was our target.)

The “A” Cold Email

Reply Rate: 9.8%

Jack’s Notes: For this first variation, here were some of the targeting filters used:

Business Owners, in our target industry, with a company founded X years ago, in a city where my client had a buyer.

For this variation, we decided to be direct and ask them for a call regarding the sale of their business. And, of course, we made sure to add personalization following our CCQ framework.

Subject: numbers

{{firstname}}, {{CUSTOM INTRO SENTENCE — CCQ}}

Forgive me for being direct, but if I had a potential buyer in {{city}} interested in purchasing {{company}}, would you be open to hearing their offer?

If so, what does your calendar look like for a short call?

{{SIGNATURE}}

P.S. For background, my company helps entrepreneurs in the {{INDUSTRY}} space find the right buyer for their business when they’re finally ready to retire.

---

Pretty good email, huh? That’s what I thought… Until the replies started coming in.

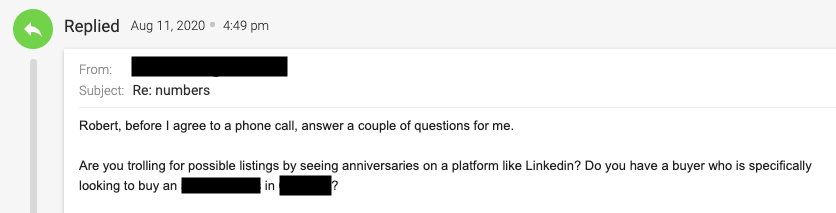

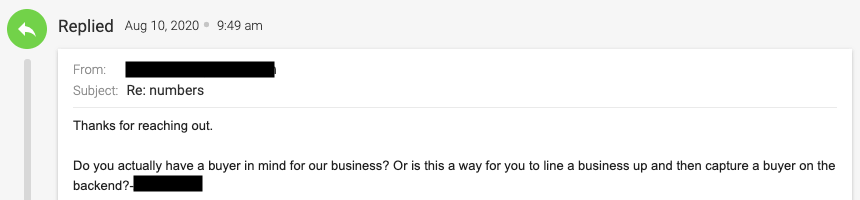

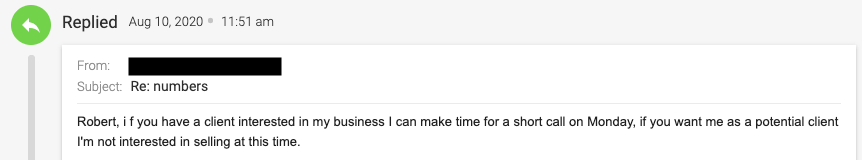

The “A” Cold Email Replies

That email got a 9.8% response rate.

Not terrible for a first attempt, but the replies were mostly negative. Of the first 8 responses, 2 were positive (agreeing to a meeting), 3 were not interested, and 3 shared a common pattern.

How We Wrote the “B” Variation: Addressing the #1 Problem

Do you sense a common theme in those replies?

Common Objection: They didn’t believe our client actually had a buyer in their city ready to make an offer.

So we created a test variation that could reduce the skepticism…

Takeaway: This was learned based on feedback! (Not reply rate.)
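If you want to make this kind of reply review repeatable, a simple tally is enough. The sketch below uses the counts from the first 8 replies; the label names are my own, and treating the 3 "not interested" replies as giving no reason is an assumption for illustration.

```python
from collections import Counter

# (outcome, objection) for the first 8 replies described above.
# Label names are illustrative; objection is None when no reason was given (assumed).
replies = (
    [("positive", None)] * 2
    + [("negative", None)] * 3
    + [("negative", "doubts_buyer_is_real")] * 3
)

# How many replies were positive vs. negative.
outcomes = Counter(outcome for outcome, _ in replies)

# Only count replies that actually state an objection.
objections = Counter(obj for _, obj in replies if obj is not None)
top_objection = objections.most_common(1)[0]

print(outcomes["positive"], outcomes["negative"])  # 2 6
print(top_objection)  # ('doubts_buyer_is_real', 3)
```

The most common stated objection is the one your “B” variation should address first.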

Fortunately, our client did have a buyer in those markets ready to purchase a business if it was a good fit.

So here’s what we did.

2 Changes That Doubled Our Reply Rate:

- We dropped the word “potential” (as in “potential buyer”) from the copy. We learned this was causing skepticism, and our client HAD a partner ready to make an offer. So this word was a MAJOR problem area.

- We told them WHY we contacted them to make our pitch more believable. The new copy mentions that we were only targeting a certain kind of business: one that was at least X years old and had a strong reputation, based on its online reviews. So I included that (worded nicely, of course) in the P.S. to let them know we specifically targeted THEM.

Here’s what happened:

We went from a 9.8% response rate (mostly negative replies) to an 18% response rate with over 70% of replies marked as positive! #win
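For anyone who wants to check the math on their own campaigns, relative lift is a one-line calculation. This is a small sketch with a function name of my own choosing; it only looks at the two reply rates quoted above, not at how many of those replies were positive.

```python
def relative_lift(before_pct: float, after_pct: float) -> float:
    """Percentage increase from the old rate to the new rate."""
    return (after_pct - before_pct) / before_pct * 100


lift = relative_lift(9.8, 18.0)
print(f"{lift:.1f}% more replies")  # 83.7% more replies
```

Reply rate alone came close to doubling. The share of positive replies rose as well, so the gain in booked appointments is larger than the reply-rate lift by itself suggests.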

The “B” Cold Email

Reply Rate: 18%

Subject: numbers

{{firstname}}, {{CUSTOM INTRO SENTENCE — CCQ}}

Forgive me for being direct, but I have a partner in {{city}} looking to acquire a company like {{company}}.

Are you open to talking numbers?

Best,

Robert

P.S. To be transparent, we’re looking for an {{INDUSTRY}} company in the area that’s been around for {{TIME PERIOD}} and has a strong reputation like yours. But if you’re not interested, you’re free to ignore this.

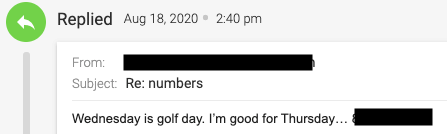

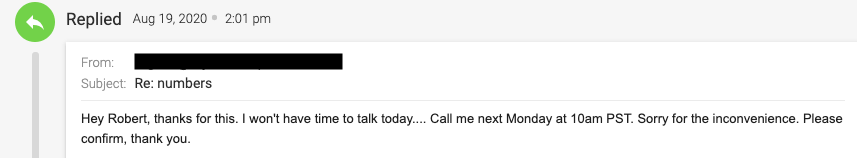

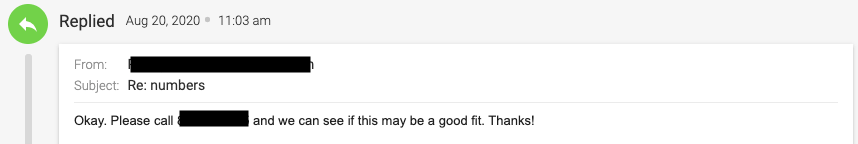

Results: Real Replies From This Variation

Conclusion

A few key takeaways for you:

- Yes—cold email still works. But in 2026, personalization isn’t optional if you want real results. In fact, it’s so effective that we brought on a full-time “Personalization Expert.” Test it for yourself and see what changes.

- Build your “B” variation by digging into your negative replies. It’s still the fastest, most reliable way to run A/B tests that actually move the needle. (Reply rates on their own can be misleading.)

And don’t overlook list building. This campaign would have fallen flat if we targeted the wrong audience. Study your current clients and customers, identify shared traits, and use those insights to build tightly focused, high-quality lists.